AI Thumbnail Testing vs. Manual Testing

Your thumbnail can make or break your video's success. Whether you use AI or manual testing, the goal is the same: improve click-through rates (CTR) and maximize viewer engagement. Understanding CTR benchmarks helps set realistic goals for these tests. Here's a quick breakdown:

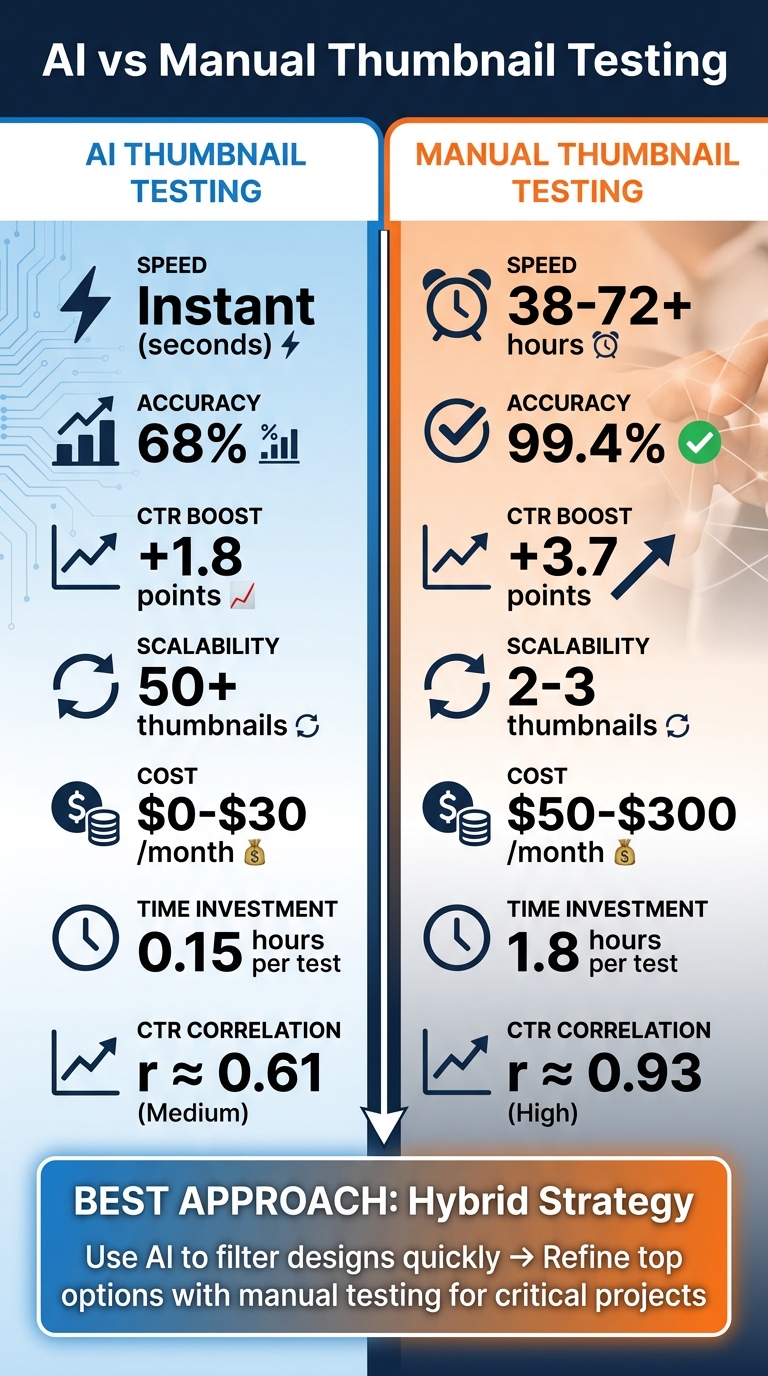

- AI Thumbnail Testing: Fast and scalable. AI predicts performance using algorithms, analyzing factors like contrast, face detection, and text readability. It works in seconds and is cost-effective ($0–$30/month). However, AI predictions align with actual results only 68% of the time.

- Manual Thumbnail Testing: Slower but more accurate. This method involves A/B testing thumbnails with real viewers, delivering a 99.4% reliability rate. It provides deeper insights but requires significant time (38–72+ hours) and effort. Costs range from $50 to $300/month.

Key Stats:

- AI boosts CTR by +1.8 points on average, while manual testing achieves +3.7 points.

- AI testing correlates with CTR at r ≈ 0.61, while manual testing reaches r ≈ 0.93.

- AI can test 50+ thumbnails pre-upload; manual testing typically handles 2–3 at a time.

Quick Comparison:

| Metric | AI Testing | Manual Testing |
|---|---|---|
| Speed | Instant (seconds) | 38–72+ hours |
| Accuracy | 68% | 99.4% |
| CTR Boost | +1.8 points | +3.7 points |
| Scalability | 50+ thumbnails | 2–3 thumbnails |
| Cost | $0–$30/month | $50–$300/month |

Best Approach: Use AI to quickly filter designs, then refine top options with manual testing for critical projects. This hybrid strategy balances speed and precision, saving time while improving results.

AI vs Manual Thumbnail Testing: Speed, Accuracy, and Cost Comparison

How to Compare AI and Manual Thumbnail Testing

Thumbnail performance plays a pivotal role in determining click-through rates (CTR). Here’s a closer look at how AI and manual thumbnail testing stack up against each other in terms of efficiency, accuracy, and results. Each method offers its own advantages and trade-offs, which can significantly influence your workflow and video performance.

AI testing is lightning-fast, delivering predictive scores for multiple thumbnails in mere seconds. This enables creators to evaluate dozens of designs before publishing. On the other hand, manual A/B testing takes considerably longer - between 38.2 and 120 hours - to gather enough data for statistically significant results. This time disparity is crucial for creators working under tight deadlines or managing a heavy content schedule.

When it comes to accuracy, the picture becomes more nuanced. AI tools tend to correlate with final CTR at around r ≈ 0.61, while live A/B testing demonstrates a much stronger correlation of approximately r ≈ 0.93. As Dr. Arjun Mehta, Director of Algorithmic Research at StreamLabs Analytics, points out:

"The biggest misconception is treating AI thumbnail scoring as a replacement for experimentation. It's a triage tool - not a verdict."

Performance metrics further highlight the differences. Over a year-long study involving 317 mid-tier channels, manual testing achieved a +3.7 percentage point increase in CTR, compared to a +1.8 point gain from AI predictions. AI, however, shines in scalability, allowing creators to test over 50 thumbnails pre-upload, while manual testing typically handles only 2–3 variants per live test. Additionally, AI requires just 0.15 hours of setup time, a stark contrast to the 1.8 hours needed for manual testing tasks like design, scheduling, and monitoring.

Efficiency: Time and Effort Required

AI testing is ideal for high-volume creators, offering instant feedback on multiple thumbnails and quickly eliminating weak designs.

Conversely, manual A/B testing demands more time and effort. On average, it takes 38.2 hours to reach a decision, with the 90th percentile stretching to 62 hours. Robust statistical conclusions also require 1,000–2,000 impressions per variant.
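One common way to check whether a CTR gap between two variants is more than noise is a two-proportion z-test. The sketch below is illustrative only: the function name and the click counts are hypothetical, and YouTube's own testing features may use a different method.

```python
import math

def two_proportion_z_test(clicks_a, impressions_a, clicks_b, impressions_b):
    """Return (z, two-sided p-value) for the CTR difference between variants A and B."""
    ctr_a = clicks_a / impressions_a
    ctr_b = clicks_b / impressions_b
    # Pooled CTR under the null hypothesis that both variants perform the same.
    pooled = (clicks_a + clicks_b) / (impressions_a + impressions_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / impressions_a + 1 / impressions_b))
    if se == 0:
        # No variation at all (e.g. zero clicks on both variants): nothing to compare.
        return 0.0, 1.0
    z = (ctr_b - ctr_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value

# Hypothetical counts: 2,000 impressions per variant, 86 vs. 138 clicks.
z, p = two_proportion_z_test(86, 2000, 138, 2000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value (conventionally below 0.05) suggests the gap is unlikely to be chance. With only a few hundred impressions per variant, the same CTR gap would often fail this check, which is why the impression thresholds matter.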

A hybrid approach can improve efficiency. For instance, in January 2024, the tech review channel TechLens (412,000 subscribers) used AI to narrow down 12 concepts to 4 finalists for manual testing. This reduced their median iteration time from 71.2 hours to 22.6 hours - a 68% improvement.
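The 68% figure follows directly from the two median times quoted above. A quick check, with an illustrative helper name:

```python
def percent_reduction(before, after):
    """Percentage by which `after` is smaller than `before`."""
    return (before - after) / before * 100

# TechLens median iteration time: 71.2 hours before, 22.6 hours after.
print(round(percent_reduction(71.2, 22.6), 1))  # 68.3
```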

Accuracy: Data Analysis vs. Human Judgment

AI testing relies on historical data and pattern recognition to predict thumbnail performance. It’s particularly adept at identifying objective thumbnail mistakes to avoid, such as poor contrast or unreadable text. However, it often misses the subtler, behavioral factors that influence viewer engagement.

Manual testing, by contrast, measures actual viewer behavior in real-world scenarios. It accounts for variables like competitive positioning, emotional appeal, and changing audience preferences. This ability to connect context with outcomes makes manual testing more reliable in distinguishing correlation from causation.

Smaller channels may find AI predictions less reliable: for channels with fewer than 50,000 subscribers, AI’s correlation with CTR can drop to r = 0.44. Larger niche channels can also see AI miss audience-specific preferences. The true crime channel "Case File Archives" (142,000 subscribers) discovered that while AI favored thumbnails with shadowed faces, a 72-hour A/B test revealed their audience preferred clear document close-ups. This adjustment boosted their CTR from 4.3% to 6.9% within six weeks.
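Because this article quotes lifts both in percentage points and in percent, it helps to keep the two apart. A minimal sketch using the Case File Archives figures; the function names are illustrative:

```python
def lift_in_points(ctr_before, ctr_after):
    """Absolute change in CTR, in percentage points."""
    return ctr_after - ctr_before

def relative_lift_percent(ctr_before, ctr_after):
    """Change in CTR relative to the starting CTR, in percent."""
    return (ctr_after - ctr_before) / ctr_before * 100

# CTR moved from 4.3% to 6.9%.
print(round(lift_in_points(4.3, 6.9), 1))         # 2.6
print(round(relative_lift_percent(4.3, 6.9), 1))  # 60.5
```

The same change is a lift of 2.6 percentage points and a relative improvement of roughly 60%, so it is worth checking which unit a given statistic uses before comparing numbers.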

Results: Performance Metrics and Optimization

Both methods yield different outcomes in real-world performance. AI predictions correlate with CTR at r ≈ 0.55–0.65, while manual A/B tests achieve stronger correlations of r ≈ 0.88–0.95. Manual testing also tends to increase CTR by 20–40%.
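The r values above are Pearson correlation coefficients. As a rough sketch of how you might compute one for your own channel, the function below compares predicted scores with the CTR each thumbnail eventually reached. The sample numbers are invented for illustration and are not taken from any study cited here.

```python
import math

def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of the same length (at least 2 items)")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when one sequence is constant")
    return cov / math.sqrt(var_x * var_y)

# Hypothetical data: AI scores for five thumbnails and their final CTR (%).
ai_scores = [62, 70, 75, 81, 90]
final_ctr = [4.1, 3.8, 5.0, 4.6, 5.9]
print(f"r = {pearson_r(ai_scores, final_ctr):.2f}")
```

Values near 1 mean the scores track real CTR closely; values near 0 mean the scores say little about how a thumbnail will actually perform.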

Additionally, manual testing provides insights beyond CTR, such as how thumbnails affect watch time, audience retention, and overall satisfaction. These secondary metrics are becoming increasingly important with YouTube’s evolving algorithms. For instance, the platform’s 2026 "Satisfaction-Weighted Discovery" update penalizes misleading thumbnails that lead to quick viewer drop-offs, potentially reducing view counts by up to 30%.

Cost is another factor to consider. AI tools are relatively inexpensive, costing between $0 and $30 per month and requiring minimal setup. In contrast, manual testing platforms range from $50 to $300+ per month and demand more hands-on involvement. A study by NoteLM.ai found that while 72-hour manual tests achieved 86% accuracy, AI-powered tools required 14 days to reach comparable reliability.


AI Thumbnail Testing: Features and Benefits

AI-driven thumbnail testing is changing the game for YouTube creators, especially those juggling tight schedules or managing multiple channels. Instead of spending hours designing and testing thumbnails manually, creators can now generate, test, and refine them before their videos even go live.

Automated Thumbnail Creation and Variant Testing

AI tools make thumbnail creation lightning-fast, cutting the process from hours to mere seconds. Creators can instantly generate 5–12 variations and analyze them using AI-powered tools that evaluate critical factors like contrast, face detection, text clarity, and color saturation. These tools even flag potential design issues before you spend time on live testing.

The efficiency is remarkable: AI testing setups are 12 times faster (0.15 hours compared to 1.8 hours) and can screen over 50 variants pre-upload. In contrast, traditional methods typically allow for testing just 2–3 versions at a time. Features like automated background removal and face-focused positioning make subjects pop, with face-focused thumbnails achieving a 38% higher click-through rate.

This automation sets the stage for large-scale testing.

Scalability for High-Volume Testing

For creators producing a high volume of content, maintaining quality across numerous thumbnails can feel overwhelming. AI tools simplify this by allowing quick adjustments to elements like text, backgrounds, and subjects. With AI-assisted templates, you can create 10 high-quality thumbnail variations in just 15 minutes.

These tools also ensure consistent branding across hundreds of thumbnails - a task that's nearly impossible to manage manually under tight deadlines. They go a step further by intelligently adapting images to different aspect ratios for platforms like YouTube, TikTok, and Instagram, avoiding the pitfalls of simple cropping. Plus, with costs ranging from $0 to $30 per month, AI tools are far more affordable than manual A/B testing platforms, which can cost $50 to $300 or more monthly.

Using ThumbnailCreator in Your Workflow

ThumbnailCreator takes these advanced features and integrates them seamlessly into your creative process. With pre-designed templates, you don’t need design expertise to produce professional-looking thumbnails in seconds. Tools like face swapping let you test different expressions and subjects without scheduling new shoots, while text editing ensures your headlines stay readable on mobile screens.

The object swapping feature allows for quick changes to backgrounds and other elements, making it easy to fine-tune your designs. ThumbnailCreator also acts as a diagnostic tool, flagging issues like unreadable text or low contrast before you commit to live A/B testing. By generating 4–5 versions of each concept, you can select the one most likely to spark curiosity or emotion. Then, validate your top picks using YouTube's free "Test & Compare" feature to gather real viewer data.

Manual Thumbnail Testing: Strengths and Limitations

Manual thumbnail testing gives you full control over every design detail. While it takes more time and effort than AI-based methods, it allows for creative possibilities that automated tools and AI thumbnail generation can't always match.

Creative Freedom and Customization

Designing thumbnails manually means you're not tied to templates or pre-set models. You can create intricate designs with custom effects and typography that align perfectly with your brand's identity. This approach is especially valuable for flagship videos, brand overhauls, or high-stakes collaborations where top-notch quality is a must.

Manual testing also accounts for contextual nuances that AI often overlooks. Humans can notice how factors like scroll speed on mobile devices affect thumbnail visibility or how performance varies between the Home feed and Search results. For niche audiences, these details are critical - true crime enthusiasts may respond to forensic imagery, while finance viewers might prefer technical diagrams. Such specific triggers often fall outside the scope of AI training data.

When statistical significance is reached, manual A/B testing achieves a 99.4% reliability rate, setting the standard for accuracy. Even shorter manual tests, like those running for 72 hours, can achieve an 86% accuracy rate in identifying the best-performing thumbnail. However, this precision comes with a trade-off.

Time-Intensive Process

Creating a single thumbnail manually can take 3 to 4 hours, and running a proper A/B test adds even more time. On average, manual testing requires 1.8 hours of creator time per test, compared to just 0.15 hours for AI-powered methods. You'll also need to manually swap thumbnail variants at specific intervals - a tedious task that can introduce timing biases.

The process demands constant attention to YouTube Studio analytics, with manual tracking of metrics like CTR and impressions. Unlike automated systems that deliver insights in a matter of hours or days, manual testing often takes days to weeks to yield reliable results. These time demands also limit the number of variables you can test effectively.

Smaller-Scale Testing and Subjective Feedback

Because manual testing is so labor-intensive, it typically handles only 1–2 variables at a time, whereas AI systems can test multiple elements simultaneously. This creates bottlenecks, making it challenging to experiment with different hooks across a large video library.

Another drawback is the risk of relying on subjective judgment. Personal preferences can lead you to dismiss designs that might actually perform well. To minimize this, focus on testing one element at a time - such as a facial expression or a text hook - and allow at least 48–72 hours before deciding on a winner. Keep detailed records of each test's start and end dates, impressions, clicks, and CTR to build a data-driven playbook tailored to your channel.
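The record-keeping advice above could be captured in a small structure like the following. This is only a sketch: the class and field names are made up for illustration, and a spreadsheet with the same columns works just as well.

```python
from dataclasses import dataclass
from datetime import date

@dataclass
class ThumbnailTest:
    """One row in a channel's testing log (field names are illustrative)."""
    variant: str
    element_tested: str
    start: date
    end: date
    impressions: int
    clicks: int

    @property
    def ctr_percent(self):
        # CTR as a percentage; 0.0 when the variant has no impressions yet.
        return 100 * self.clicks / self.impressions if self.impressions else 0.0

    @property
    def duration_days(self):
        return (self.end - self.start).days

def best_variant(tests):
    """Return the logged test with the highest CTR."""
    return max(tests, key=lambda t: t.ctr_percent)

# Hypothetical entries for a single-element test (facial expression only).
log = [
    ThumbnailTest("neutral face", "facial expression",
                  date(2024, 1, 1), date(2024, 1, 4), 2000, 86),
    ThumbnailTest("surprised face", "facial expression",
                  date(2024, 1, 1), date(2024, 1, 4), 2000, 138),
]
winner = best_variant(log)
print(winner.variant, round(winner.ctr_percent, 1), winner.duration_days)
```

Logging the element tested alongside the numbers makes it easier to see, over many tests, which kinds of changes tend to move CTR on your channel.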

AI Thumbnail Testing vs. Manual Testing: Side-by-Side Comparison

This comparison highlights the key differences between AI-driven and manual thumbnail testing, breaking down the metrics that matter most.

When it comes to time, AI testing requires just 0.15 hours of active effort per test, compared to the 1.8 hours needed for manual testing - a difference of 12× in active labor. However, accuracy is where manual testing shines, achieving 99.4% reliability, while AI predictions correctly identify the winning thumbnail only 68% of the time.

The results reflect these differences. AI-predicted winners yield an average CTR lift of +1.8 percentage points, whereas manual testing delivers a more substantial lift of +3.7 percentage points. Additionally, the correlation with the final 7-day CTR is stronger for manual testing (r ≈ 0.93) compared to AI scoring (r ≈ 0.61).

Comparison Table

| Metric | AI Thumbnail Testing | Manual A/B Testing |
|---|---|---|
| Decision Speed | Instant (seconds) | 38.2 to 72+ hours |
| Time Investment | 0.15 hours per test | 1.8 hours per test |
| Reliability | 68% accuracy | 99.4% accuracy |
| CTR Correlation | Medium (r ≈ 0.61) | High (r ≈ 0.93) |
| Average CTR Lift | +1.8 percentage points | +3.7 percentage points |
| Scalability | Test 50+ variants | Limited to 2–3 variants |
| Data Source | Historical benchmarks | Real-time audience behavior |
| Monthly Cost | $0–$30 | $50–$300+ |

This side-by-side breakdown underscores the trade-off between speed and precision. AI testing is ideal for quickly screening a high volume of designs or running initial assessments. On the other hand, manual testing is better suited for high-priority content where accuracy and impact are critical. For flagship projects, the extra time and effort invested in manual testing often pay off. Meanwhile, AI provides a practical solution for faster iterations and eliminating weaker designs.

How to Combine AI and Manual Testing Methods

Blending AI and manual testing can streamline your workflow and deliver better results. The key isn't to choose one over the other but to compare thumbnail strategies and use both in tandem. AI can quickly generate multiple design options, while manual adjustments add the polish needed for top-tier performance. For example, you might start with AI to create 5–10 design variations focusing on hooks, color schemes, and layouts. Then, manually refine the top 1–2 designs by tweaking details like kerning, facial expressions, or brand elements. Finally, validate their effectiveness through data-driven A/B testing.
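The filtering step in this workflow amounts to ranking drafts by their predicted score and keeping the best few for manual refinement. A minimal sketch, assuming your AI tool exposes some numeric score per draft; the draft names and scores below are hypothetical:

```python
def shortlist(ai_scores, keep=2):
    """Return the names of the `keep` highest-scoring drafts, best first."""
    ranked = sorted(ai_scores, key=ai_scores.get, reverse=True)
    return ranked[:keep]

# Hypothetical AI scores for six generated drafts.
drafts = {
    "bold-text": 0.71,
    "close-up-face": 0.84,
    "split-screen": 0.58,
    "dark-background": 0.49,
    "product-hero": 0.77,
    "question-hook": 0.66,
}
print(shortlist(drafts, keep=2))  # ['close-up-face', 'product-hero']
```

The shortlist is a starting point for manual refinement and live testing rather than a final verdict, since predicted scores and real CTR do not always agree.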

Hybrid Testing Strategies

Start by generating 3–6 AI-driven designs based on a concise creative brief. Preview these at 20–30% zoom to mimic how they’ll appear on mobile devices, where most viewers consume content. After narrowing down your favorites, refine them manually to fine-tune elements like kerning and branding. This approach can dramatically cut design time - reducing it from about 2 hours to just 14 minutes per thumbnail.

Take the example of the true crime channel "Case File Archives" (142,000 subscribers) in October 2023. They used AI to eliminate low-contrast drafts and then conducted 72-hour A/B tests on the remaining options. While AI leaned toward "mystery" designs, human viewers preferred thumbnails with clear document close-ups. This adjustment raised their average CTR from 4.3% to 6.9% in just six weeks.

Once you've established a hybrid workflow, the next step is to fine-tune your testing process for even better results.

Setting Up Effective A/B Tests

The duration of your tests is critical. For directional insights, run tests for at least 48–72 hours. For more reliable data, extend the testing period to 14 days or until each variant reaches at least 1,000 impressions. A study by the NoteLM Team in January 2026, which analyzed 127 controlled tests across 15 YouTube channels, revealed that 70% of tests identified a clear winner, with an average CTR improvement of 37%. The most dramatic increase - a 74% boost - came from switching a neutral facial expression to a surprised one.
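The duration guidance above can be written as a simple rule of thumb. The sketch below encodes the thresholds from this section (48 hours for directional insight; 14 days or 1,000 impressions per variant for more reliable data). The function name and return labels are illustrative.

```python
def readiness(hours_elapsed, impressions_per_variant):
    """Classify a running test using the thresholds described in this section."""
    fewest_impressions = min(impressions_per_variant)
    # More reliable data: 14 days elapsed, or every variant has 1,000+ impressions.
    if hours_elapsed >= 14 * 24 or fewest_impressions >= 1000:
        return "reliable"
    # Directional insight: at least 48 hours of data.
    if hours_elapsed >= 48:
        return "directional"
    return "too early"

print(readiness(50, [400, 600]))    # directional
print(readiness(30, [1200, 1500]))  # reliable
print(readiness(10, [100, 90]))     # too early
```

Using the smallest impression count across variants keeps one well-served thumbnail from making the whole test look finished.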

To get accurate results, test one major element at a time. For instance, you might adjust the facial expression while keeping other factors, like the video title, constant. Once you identify a winning element, use it as a baseline for further tests to refine other aspects.

With your A/B tests in motion, the focus shifts to tracking performance metrics.

Monitoring Key Metrics

CTR (Click-Through Rate) should be your primary focus, but don't overlook Average View Duration (AVD) to ensure your thumbnails aren’t misleading. YouTube's "Test & Compare" tool prioritizes watch time share over clicks, helping you identify thumbnails that best represent your content. For meaningful insights, aim for at least 1,000–2,000 impressions per test, and for definitive results, target 5,000+ impressions. Even a small CTR difference - like 0.5% - can significantly impact performance when scaled across millions of impressions.
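To see why a small CTR difference matters at scale, the arithmetic can be sketched as follows. This assumes the 0.5% figure means 0.5 percentage points; the impression count is hypothetical.

```python
def extra_clicks(impressions, ctr_gain_points):
    """Additional clicks from a CTR gain given in percentage points."""
    return impressions * ctr_gain_points / 100

# Hypothetical: 2 million impressions and a 0.5-point CTR gain.
print(extra_clicks(2_000_000, 0.5))  # 10000.0
```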

Additionally, monitor engagement metrics like likes, comments, and shares. These can provide a broader perspective on the quality of the audience your thumbnails attract. This combination of metrics ensures you're not just chasing clicks but building meaningful viewer engagement.

Conclusion: Choosing the Right Approach

Deciding between AI and manual testing isn't an either-or situation - it’s about finding the right fit for your needs. AI tools shine when speed is essential, cutting down design time from hours to just 30 seconds and quickly eliminating glaring issues like poor contrast or illegible text. On the other hand, manual A/B testing delivers much higher reliability, with live testing correlating strongly with final CTR (r = 0.93) compared to AI’s weaker correlation (r = 0.61).

For creators producing multiple videos each week or working within tight budgets, AI testing offers the efficiency and scale needed to stay ahead. Tools like ThumbnailCreator can generate various design options instantly, helping you test ideas before committing valuable views. However, manual testing should be reserved for high-stakes situations - launching a new series, creating sponsored content, or targeting competitive keywords where precision and brand alignment are critical.

A smart strategy blends the strengths of both approaches. For example, using AI to create 6–8 thumbnail concepts and manually testing the top 2–3 can drastically reduce iteration time by optimizing your thumbnail workflow. TechLens applied this hybrid model and cut their iteration time by 68%, while raising their CTR lift from +11.3% to +18.7%.

"AI thumbnail scores are like weather forecasts - they tell you what might happen based on patterns. Human A/B tests are like soil sensors: they tell you what is happening, right now, in your garden." - Dr. Lena Park, Behavioral Media Scientist, MIT Comparative Media Lab

The key is combining speed with accuracy. Think of AI as a first filter, helping you avoid wasting views on underperforming designs. Manual testing then reveals what truly connects with your audience. Creators who balance both methods see CTR improvements of 20–40%. By aligning your testing approach with your content goals, you can maximize your impact and keep viewers engaged.

FAQs

When should I use AI testing vs. manual A/B testing?

AI testing is excellent for quickly creating thumbnail variations, estimating click-through rates (CTR), and fine-tuning content for mobile audiences. It's a time-saver, making it perfect for fast-paced experiments or brainstorming new ideas.

On the other hand, manual A/B testing shines when you need detailed, long-term insights, particularly for high-priority campaigns. This approach involves testing elements over an extended period to gauge audience preferences and perfect designs that reflect your brand's identity.

How many impressions are needed for a reliable A/B test?

For an effective A/B test, ensure each variant gets at least 5,000 impressions or run the test for at least 7 days - whichever happens first. This approach helps you collect enough data to make accurate comparisons and reach reliable conclusions.

How do I combine AI filtering with YouTube’s Test & Compare?

To merge AI filtering with YouTube’s Test & Compare, begin by using AI-driven tools like ThumbnailCreator to generate several high-quality thumbnail options in no time. Once you have these variations, upload them to YouTube’s Test & Compare feature, which allows you to run A/B tests on up to three thumbnails simultaneously. This method combines the speed of AI with YouTube’s analytics to pinpoint the thumbnails that perform the best.